As if you forgot, right? Today, the real next generation in gaming begins, with the release of the NVIDIA GeForce RTX 3080 as the first desktop card on the Ampere architecture.

Need a reminder of just how ridiculous and powerful the RTX 3080 is? Here's some specs:

| GEFORCE RTX 3080 | |
|---|---|
| NVIDIA CUDA® Cores | 8704 |
| Boost Clock (GHz) | 1.71 |
| Standard Memory Config | 10 GB GDDR6X |
| Memory Interface Width | 320-bit |
| Ray Tracing Cores | 2nd Generation |
| Tensor Cores | 3rd Generation |
| Maximum GPU Temperature (°C) | 93 |
| Graphics Card Power (W) | 320 |
| Recommended System Power (W) | 750 |
| Supplementary Power Connectors | 2x PCIe 8-pin |

Additional details: the card supports the latest Vulkan, OpenGL 4.6, HDMI 2.1, DisplayPort 1.4a, HDCP 2.3, PCI Express 4 and the AV1 codec.

Stock is expected to be quite limited, especially since NVIDIA did not offer pre-orders, so stores will likely sell out quickly. Even so, here's a few places where you might be able to grab one. Some of the sites are under quite a heavy load due to high traffic, so prepare to wait a bit. I've seen plenty of "website not available" issues today while waiting to get links.

UK

USA

Feel free to comment with more and we can add them in.

Driver Support

Along with the release, NVIDIA also put out a brand new Linux driver with 455.23.04. This is a Beta driver, so there may be some rough edges they still need to iron out. It brings in support for the RTX 3080, RTX 3090 and the MX450.
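If you want to check whether a machine already runs a new enough driver, a minimal sketch follows. It assumes `nvidia-smi` is installed and on the `PATH`, and that any driver at or above 455.23.04 still carries the RTX 3080 support. The helper names (`parse_version`, `meets_minimum`, `installed_driver_version`) are made up for this illustration.

```python
import subprocess

# 455.23.04 is the Beta driver named above; treating it as the minimum
# version for RTX 3080 support is an assumption of this sketch.
MIN_DRIVER = (455, 23, 4)


def parse_version(text):
    """Turn a string such as '455.23.04' into (455, 23, 4)."""
    return tuple(int(part) for part in text.strip().split("."))


def meets_minimum(version_text, minimum=MIN_DRIVER):
    """Return True if the given driver version is at least `minimum`."""
    return parse_version(version_text) >= minimum


def installed_driver_version():
    """Ask nvidia-smi for the driver version, or return None if unavailable."""
    try:
        result = subprocess.run(
            ["nvidia-smi", "--query-gpu=driver_version", "--format=csv,noheader"],
            capture_output=True,
            text=True,
            check=True,
        )
    except (OSError, subprocess.CalledProcessError):
        return None
    lines = result.stdout.splitlines()
    return lines[0].strip() if lines else None


if __name__ == "__main__":
    version = installed_driver_version()
    if version is None:
        print("Could not query the NVIDIA driver (is nvidia-smi installed?)")
    else:
        print(version, "OK" if meets_minimum(version) else "too old for the RTX 3080")
```

Comparing tuples of integers rather than raw strings avoids mistakes such as treating "455.9" as newer than "455.23".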

On top of new GPU support, it also has a bunch of fixes and improvements including support for device-local VkMemoryType, which NVIDIA said can boost performance with DiRT Rally 2.0, DOOM: Eternal and World of Warcraft with DXVK and Steam Play. Red Dead Redemption 2 with Steam Play should also see a bug fix that was causing excessive CPU use.

The VDPAU driver also expanded with support for decoding VP9 10- and 12-bit bitstreams, although it doesn't support 10- and 12-bit video surfaces yet. NVIDIA also updated Base Mosaic support on GeForce to allow a maximum of five simultaneous displays, rather than three. For PRIME users, there's also some great sounding fixes included too so you should see a smoother experience there.

Some bits were removed for SLI too like "SFR", "AFR", and "AA" modes but SLI Mosaic, Base Mosaic, GL_NV_gpu_multicast, and GLX_NV_multigpu_context are still supported. There's also plenty of other bug fixes.

What's next?

Today is only the start, with the RTX 3090 going up on September 24 and the RTX 3070 later in October. There's also been a leak (as always) of an RTX 3060 Ti, which is also due to arrive in October. Based on the leak, the upcoming RTX 3060 Ti will have 4864 CUDA cores and 8GB of GDDR6 (no X) memory clocked at 14Gbps, for a memory bandwidth of around 448GB/s, which means even the 3060 Ti is going to kick butt.
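For anyone wondering where a bandwidth figure like that comes from: it is the per-pin data rate multiplied by the memory bus width, divided by eight to go from bits to bytes. Here is a quick sketch, assuming a 256-bit bus for the leaked card (the bus width is not part of the figures quoted above, so treat it as an assumption):

```python
def memory_bandwidth_gb_per_s(data_rate_gbps, bus_width_bits):
    """Peak memory bandwidth in GB/s.

    data_rate_gbps: effective data rate per pin, in gigabits per second.
    bus_width_bits: width of the memory interface, in bits.
    """
    # Total gigabits per second across the bus, divided by 8 bits per byte.
    return data_rate_gbps * bus_width_bits / 8


# 14 Gbps comes from the leak; the 256-bit bus is an assumption.
leaked_card = memory_bandwidth_gb_per_s(14, 256)
print(f"{leaked_card:.0f} GB/s")
```

With those inputs the result is 448 GB/s, which lines up with the leaked figure once it is read as gigabytes per second rather than gigabits.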

Are you going for Ampere, sticking with what you have or waiting on the upcoming AMD RDNA 2 announcements? Do let us know in the comments.

In fact, the gaming GPU market is driven by two selling points, atm: 4K 144hz monitors and RTX.

It doesn't look like it. Only a small percentage uses 4K at such framerates, and RTX (I assume you simply mean ray tracing) is also not a major feature that's used in practice. The bulk of the market is taken by mid-range cards or cards aimed at the 2560x1440 / 144 Hz segment.

Something like VR, on the other hand, could be a driver for the highest-end segment, but VR is also quite a small use case so far.

I.e. the highest-end cards are surely quite hyped and talked about, but they are not where most of the money is.

You are right. Didn't say the bulk of the market was there. I was referring to the hardware manufacturers that are pushing for 4k 144hz (monitors and hdtvs) and RTX (gpus) to sell their latest innovations (Marketing). Didn't say it was successful... Yet.

Last edited by Mohandevir on 17 Sep 2020 at 10:07 pm UTC

...It's not like there were never such problems with NVidia. The question is, what's the source/reason? I had somewhat similar problems a while ago where the whole X session and input froze (sometimes within hours, sometimes after a few days), and the only thing showing a problem or reporting errors was the NVidia driver. They couldn't reproduce it, and I couldn't deliver a simple procedure to reproduce the problem. The solution in the end was: switch from KDE4 to XFCE and the problem was gone (no driver or other software update). So who's to blame here? NVidia? KDE? Both? The framework(s) that manage their interaction? Hard to say (as most times with modern software). So you shouldn't be too quick to put the blame on AMD or whoever. They might have implemented everything according to spec (it's very often NVidia that does some weird implementations), but when lots of packages / programs start to interact, things might still go wrong because people interpret specifications differently, or they rely on some special behaviour they know from other implementations which might not actually be mentioned in the specs, or is marked as undefined.

There were other problems too. Computer frozen from using GIMP and Firefox, poor performance in some games, random freezes from desktop with no programs open, 2 monitors not working. I can't remember a time where I had Linux completely freeze and not be able to switch to terminal before getting a 5700XT.

....

I want to agree with you. But for people who don't care about nvidia vs AMD. For $700 in 1.5 months, AMD is not going to go roll out something noticeably faster than the 3080. They might roll something out at $700 but the same speed, or they might roll something out 20% faster than 3080... but costs more.

And at that point, you've just waited 1.5 months to get something that is roughly equal.

The reason it's sensible to wait isn't because you're necessarily expecting AMD to release something that will blow Ampere away.

I don't regret my purchase of a 2080 Ti in the slightest: I've had two years of excellent gaming performance, and I'll likely have several more. But if, at the time, AMD had anything to offer that was in the same ballpark, or even competitive with the 1080 Ti, it would likely have been a darn sight cheaper.

In a couple of months' time, if AMD can show that they're in the game, perhaps Nvidia will slash prices, or perhaps they'll release Ti versions. It's worth waiting even if you're planning to get an Nvidia card.

My main system runs a 2060 Super however in recent months I also bought an RX 5500XT (cheapest Navi card I could get my hands on) to learn more about Mesa, compiling the drivers and living without Nvidia in general.

After my experience, I can say I like AMD better. Mesa has a certain degree of flexibility that Nvidia doesn't offer (such as running multiple drivers if necessary). If I were to go back in time with what I know now, it would be a 5700XT.

TBH I'm not expecting AMD to blow Nvidia out of the water in October, but if performance will be anywhere close at a reasonable price, I'm game for switching out my 2060 S.

Lately I've been playing on my 5500XT system a lot, and I haven't encountered any crashes, glitches or other instabilities.

Sorry long read :)

But I 100% don't expect to get a better price to performance deal, only better open source drivers.

I expect that AMD will provide better price / performance combination, but it wouldn't bother me if it won't happen. I'm not going to use Nvidia either way.

If 6800XT is anything like the 5700XT launch:

- October 28th announcement of release November 30th, reference only.

- February 1st AIB cards released.

- March 1st, I can build the kernel and Mesa from git branches to have a somewhat stable experience.

- October 2021 first Ubuntu release that works reliably, April release didn't have everything.

I have bought a few Nvidia cards and they worked with proprietary drivers the week of release with zero issues. I have a 5700XT, the question for me is:

Is there a game that will be released before October 2021 that I will want 2x performance of 5700XT? If there is, I will buy a Nvidia RTX 3080, if not I'll buy a 6800XT most likely.

Games that may make me want to upgrade:

- Cyberpunk 2077

- Vampire: The Masquerade - Bloodlines 2 (not looking good)

- Starfield

- New Elder Scrolls game

- Avowed

I bought a 5700 XT too and what you described was my experience as well. It was very disappointing. If AMD was as prompt as NVIDIA on drivers, I don't think ANYONE would buy an NVIDIA GPU on Linux at all.

For $700 in 1.5 months, AMD is not going to go roll out something noticeably faster than the 3080.

That is pretty true for the most part. NVIDIA sets pricing, and AMD prices their stuff accordingly. There is one generation that I can remember (can't remember which one) where AMD caused NVIDIA to lower their prices. If the 6800XT performs worse than the 3080, then it will cost less; if it performs better, it will cost more. They are always within like $50 for price per performance. At $700, I don't care about +/- $50.

If you make such a bold claim like twice performance, you have to prove it.

I knew from the start that 2x improvement claim was just marketing. It simply sounded unrealistic.

So I expect Big Navi to score some wins at least on compute tasks, and maybe some games optimized for AMD, and it is definitely not even close to "dead on arrival"

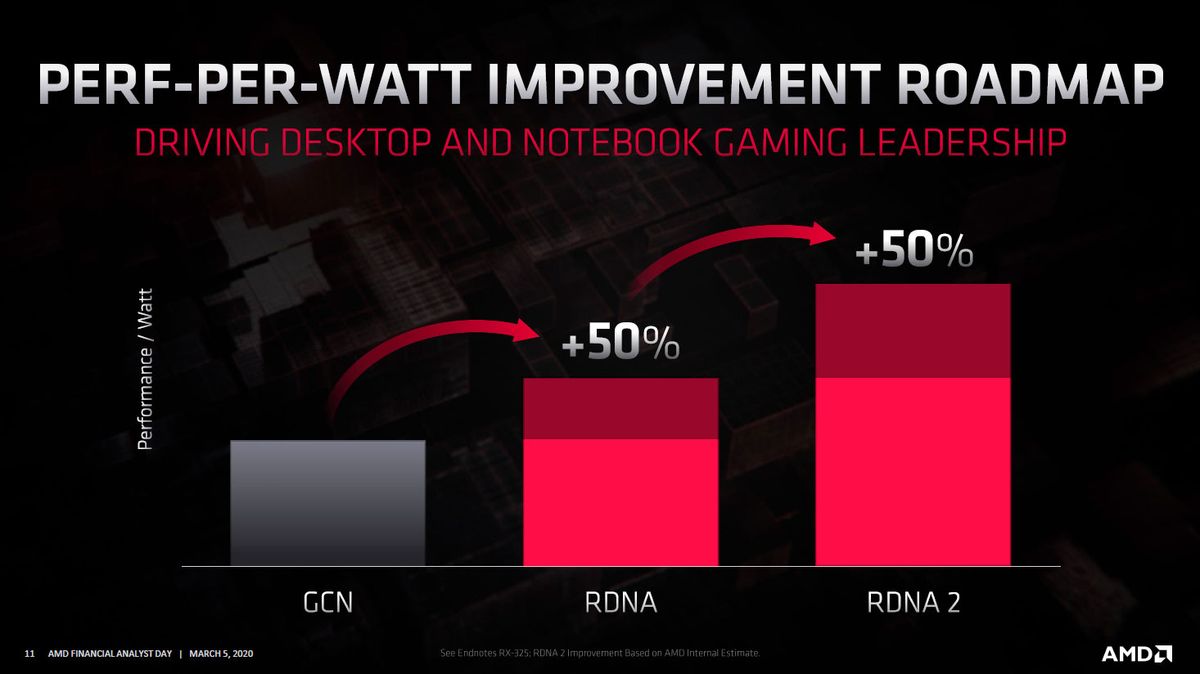

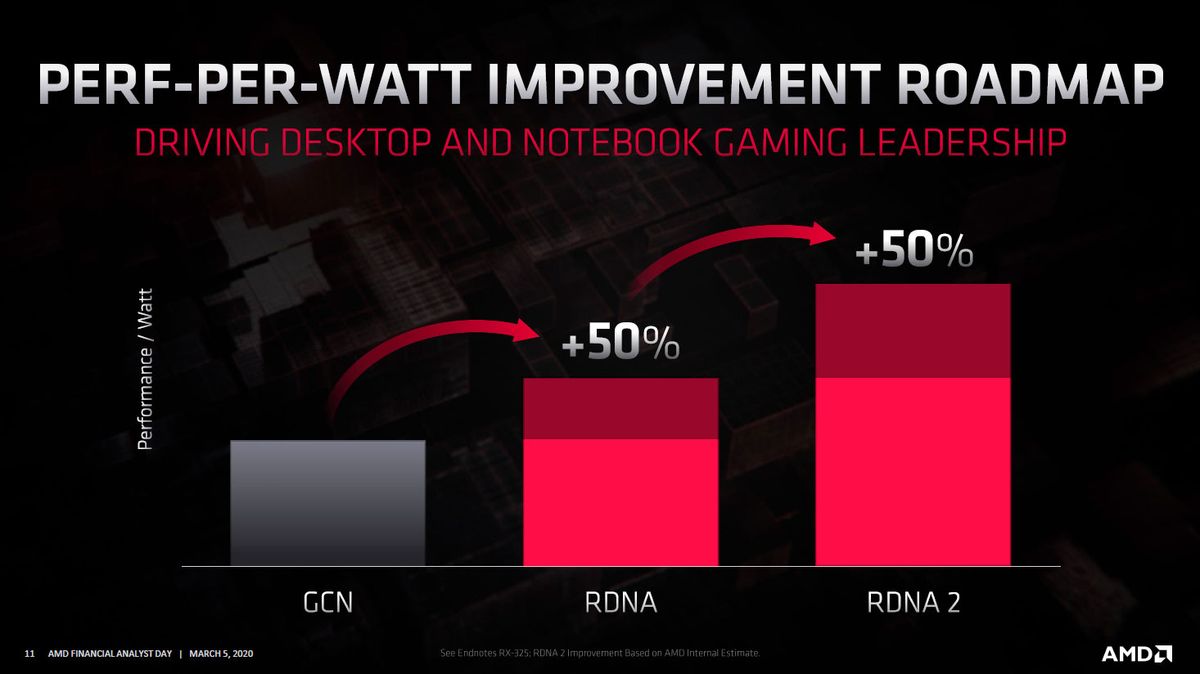

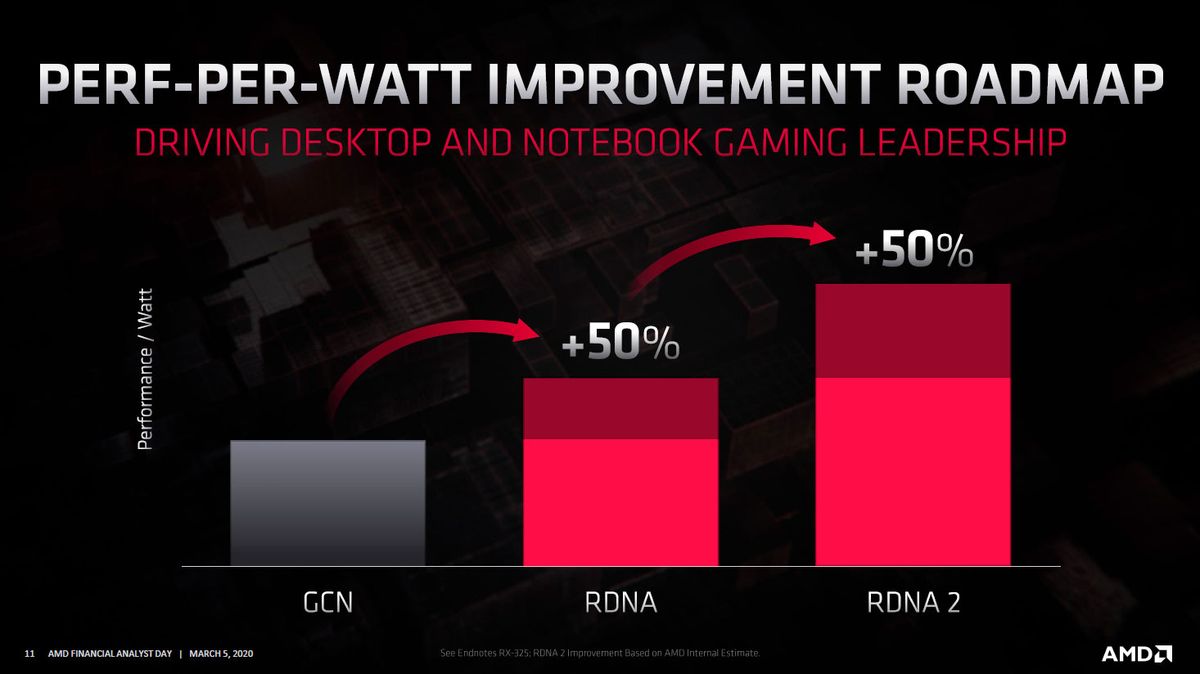

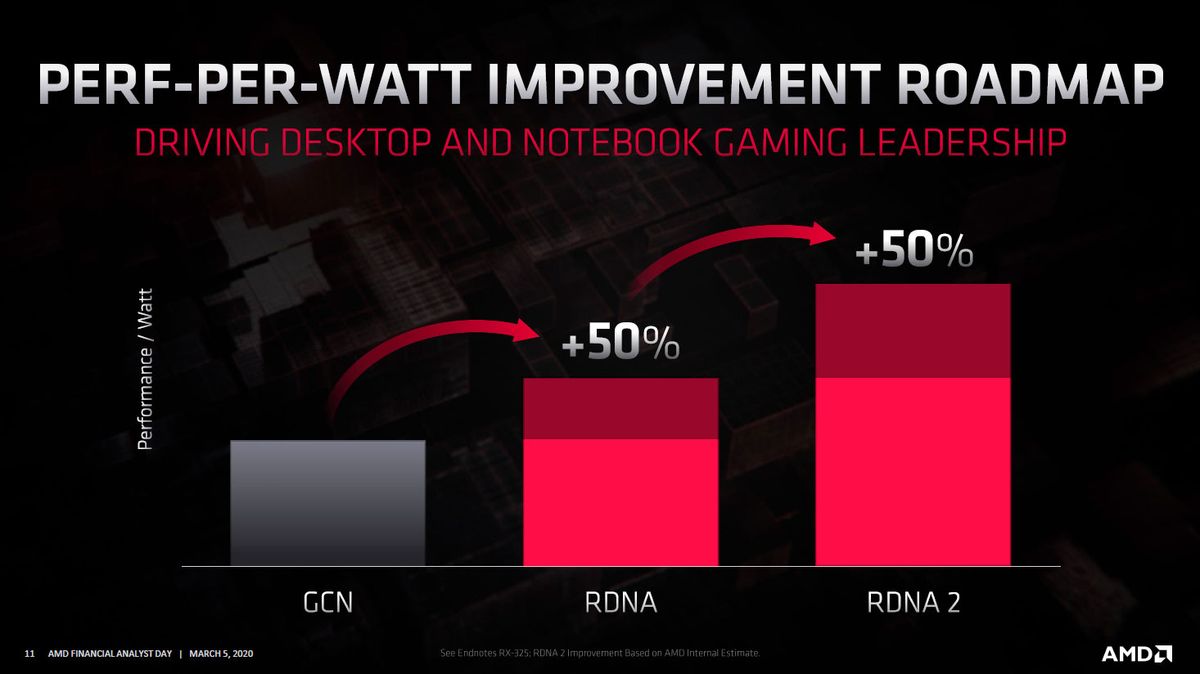

Regarding AMD, they gave projected improvements in their RDNA 2 slides and I don't expect it to be far off:

That's for performance per watt. What's not known yet, is how powerful their highest end card will be using that improvement and whatever amount of compute units they'll put in it. It could be quite a leap from 5700XT.

Last edited by Shmerl on 18 Sep 2020 at 1:46 am UTC

There are a couple of areas I care about that still don't seem to work all that well on Linux (like video encode/decode). That's pretty much a moot point with current heavily threaded CPUs, and Ryzen is a blessing for such workloads, even the older ones. Still, it's nice to put little load on the CPU when encoding video and recording the screen, which I often do (not gaming, though).

Last round I wasn't compelled to get an RTX card, on principle, because of the price point and the fact that RTX was an unproven technology (it still is for the most part), and what actually made use of those tensor cores (for consumers, that is) had little to do with ray tracing at all (DLSS). Plus I game exclusively on Linux (and have for the better part of the last 20 years now), and it seems like ray tracing is only going to come to Linux if more vendors support it (be it AMD or Intel).

I do not feel compelled to get a 30 series card just yet, and this time around I am truly intrigued by what AMD has up their sleeve. I'm only hoping it won't be another Radeon VII fiasco, but going by the scarce tidbits that have been surfacing in the rumour mill, it will be very interesting to see.

Price aside, what intrigues me the most are the Linux numbers we can expect. We already know that (if Windows numbers are anything to go by) Ampere's performance on Linux for both native and Steam Play titles will be amazing and on par with the Windows numbers (if not even better in some titles), and overall performance in other tasks such as Blender/DaVinci Resolve will most likely follow suit. AMD, on the other hand, depends on so many moving parts that I only hope everything is in place on, or as close as possible to, the release date (speaking solely about the open drivers; I'm sure they'll have their proprietary driver ready for select supported distros). Another thing that makes me curious is the performance delta we'll get between Windows and the open drivers. IIRC, last time Phoronix had something like that it was quite narrow, and in some tasks Linux performance even pulled ahead (though I do not seem able to find the article; it seems Michael never released a RADV+ACO vs Win10 comparison for Navi).

Typically we had to wait some time for good support (was it the Southern Islands cards, or even older ones, that went almost a year before proper support was in place?), as was the case for some bits of the 5700XT. Though, with everything being in the open, we know issues will be ironed out. Many (most?) tech outlets even use the open drivers to speculate about what features will be implemented in the hardware ahead of release (or simply what hardware is in the pipeline, from PCI IDs and other info). It certainly has been a fun time watching all the speculation around this release, only there's not much about what to expect.

At any rate, this time around I am truly curious how Big Navi will fare against current Navi and nVidia. It is about time someone gave nVidia a run for their money!

I want to agree with you. But for people who don't care about nvidia vs AMD. For $700 in 1.5 months, AMD is not going to go roll out something noticeably faster than the 3080. They might roll something out at $700 but the same speed, or they might roll something out 20% faster than 3080... but costs more.

And at that point, you've just waited 1.5 months to get something that is roughly equal.

The reason it's sensible to wait isn't because you're necessarily expecting AMD to release something that will blow Ampere away.

I don't regret my purchase of a 2080 Ti in the slightest: I've had two years of excellent gaming performance, and I'll likely have several more. But if, at the time, AMD had anything to offer that was in the same ballpark, or even competitive with the 1080 Ti, it would likely have been a darn sight cheaper.

In a couple of months' time, if AMD can show that they're in the game, perhaps Nvidia will slash prices, or perhaps they'll release Ti versions. It's worth waiting even if you're planning to get an Nvidia card.

Thank you. This was *exactly* my point. :)

For $700 in 1.5 months, AMD is not going to go roll out something noticeably faster than the 3080.

At $700, I don't care about +/- $50.

N.B. Now compare that to people arguing how a 1% Linux share (in Steam) must still be SO important to devs and Valve BECAUSE OF THE ABSOLUTE AMOUNT!

Yep, and it's out of stock, which should be no surprise to anyone. People are offloading them on eBay and making bank for sure.

The cards that were showing at £649.99 on UK sites are now all over £700 on pre-order, so no surprise there.

compared the 3070 to the 2080ti, then compared the 3080 to the 2080 (non super). They primed your brain so when you hear "twice as fast" you think "twice as fast as the 2080ti"... Even though 25% faster than the 2080ti is exactly what their graph (shown in the article) roughly says.

Interesting, I didn't notice the 2080ti at first glance. They put it in the furthest part of the graph. Another marketing technique here??

That is just a consequence of 2080ti's price. For the marketing trick you need to be at the other side of the graph. I'll leave it for you to discover; it's quite subtle.

You mean how the price axis starts at $200?


Moreover, somehow people believe that Nvidia GPU prices were reduced... while the truth is that they just kept the same price per tier.


Question:

The RX 5600 xt supports Radeon Rays (AMD raytracing)... What's the state of it, on Linux?

Last edited by Mohandevir on 18 Sep 2020 at 5:31 pm UTC


No, it doesn't, not on a hardware level.


Aaaaah! Radeon Rays Audio... What the...?!

Ok! Sorry then.

Last edited by Mohandevir on 18 Sep 2020 at 5:45 pm UTC
